Unlimited possibilities

Leading Edge AI Hardware. Superior Partners.

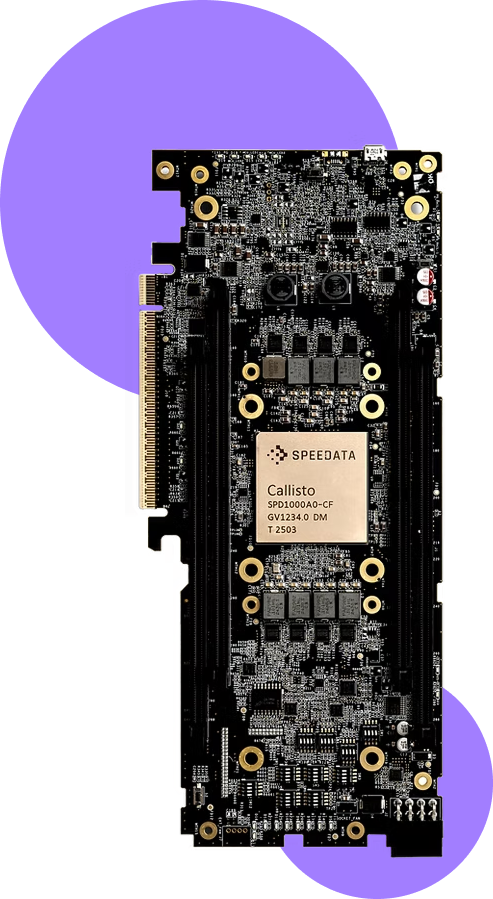

Speedata APU

The World’s First ASIC Processor for Analytics

Eliminate Apache Spark processing bottlenecks. Handle over a billion rows per second in hardware.

Early adopters report up to 50× faster Spark performance versus CPUs, with a roadmap to 100× gains. Compared to GPUs, the Speedata APU delivers an average 91% capex reduction, along with projected 94% space savings and 86% lower power consumption.

The Speedata C200 is the first analytics accelerator card powered by the Speedata APU, delivering breakthrough performance for Spark and high-bandwidth data processing, with easy PCIe integration into standard servers.

Avoid delays, missed deadlines, and abandoned analytics

Accelerate time to insight by up to 100×

Reduce energy consumption and space by up to 95%

Silicom Connectivity Solutions

Built to Service OEMs

Silicom’s multi-port and bypass adapters, smart adapters, and appliance platforms improve throughput, reduce latency, and accelerate performance for cybersecurity, network monitoring, traffic management, application delivery, WAN optimization, high-frequency trading, and other data center and enterprise applications. Its high-density networking, high-speed switching, and offload/acceleration solutions – powered by advanced silicon and FPGA technologies – are designed to scale cloud infrastructures efficiently.

With 300+ products and extensive customization capabilities, Silicom addresses critical connectivity bottlenecks across virtualized cloud, big data, IoT, and NFV/SDN/SD-WAN environments. These solutions are widely deployed by leading cloud providers, service providers, telcos, and OEMs in data centers and at the network edge, as adapters and as stand-alone virtualized CPE platforms.

“One-Stop Shop” solutions for all connectivity challenges

Technological brilliance with unmatched expertise in Intel technologies

Pre-sale engineer-to-engineer technical consulting

Top-performing AI processors

Hailo delivers breakthrough AI processors purpose-built to power high-performance deep learning applications at the edge.

Its solutions are designed for the new era of generative AI on edge devices, while also enabling advanced perception and video enhancement through a broad portfolio of AI accelerators and vision processors.

Hailo-10H M.2 AI Acceleration Module

The Hailo-10H M.2 AI Acceleration Module is a compact, high-performance AI accelerator delivering up to 40 TOPS for edge and PC systems. Designed for efficient generative AI and vision inference, it offers excellent power efficiency in a standard M.2 form factor. It is compatible with major AI frameworks and operating systems for seamless integration.

- High-performance processing of vision and generative AI models

- First-to-market AI accelerator with generative AI capabilities

- Robust and mature software suite, supported by the world’s largest edge AI community

- Featuring the Hailo-10H AI accelerator with 40 TOPS (INT4) / 20 TOPS (INT8)

- Second generation of Hailo’s market-leading AI accelerator

- Industrial grade, supporting -40°C to 85°C

- Best-in-class power efficiency: consumes 2.5 W (typical)

Hailo-8L M.2 AI Acceleration Module

Delivering data center-class performance to edge devices.

- Powered by the 13 TOPS (tera-operations per second) Hailo-8L AI processor

- Enabling real-time, low-latency, and high-efficiency AI inferencing on edge devices

- Fast time to market using a standard M.2 form factor module, available in Key B+M and Key A+E versions

- Best-in-class power efficiency

- High cost-efficiency (TOPS/$) compared with existing solutions

- Supporting extended temperature range of -40°C to 85°C

- Robust software suite supports state-of-the-art deep learning models & applications out-of-the-box

- Scalable: enables simultaneous processing of multiple streams and multiple models

- Future-ready: software-compatible migration path to Hailo-8 for more demanding AI workloads

Hailo-8 Century High-Performance PCIe Card

The high-performance Century PCIe cards enable real-time deep neural network inferencing with low power consumption across a broad range of market segments and applications, on any platform with a 16-lane PCIe slot.

- Delivering 52 to 208 TOPS (tera-operations per second)

- Supporting industrial temperature range of -40°C to 85°C

- Best-in-class power efficiency: 400 FPS/W on the ResNet-50 benchmark model

- Highest cost-efficiency, starting at $249

- Enabling real-time, low-latency, and highly efficient concurrent processing of complex pipelines with multiple streams and multiple models

- Robust software suite supports state-of-the-art deep learning models & applications out-of-the-box

Ready to discuss your next project?

Contact us

- Rybnik, Poland

- +48 880 992 280

- rafal.zydek@nghs.eu